The Funnel Didn’t Break. It Just Moved Somewhere You’re Not Measuring.

If you’ve been in digital long enough, you’ve survived a few “everything changes now” moments. Mobile. GDPR. iOS 14. GA4. The Google Optimize sunset. Each one came with its own wave of LinkedIn hot takes and panic, and, each time, the craft (mostly) held. We adapted. We moved on.

Today feels different. And I say that as someone who’s been burned before by that sentence.

Several pieces of recent research dropped, all pointing to the same structural shift. And the implications are so significant that we (anyone who optimizes websites, tests experiences, or tries to understand why users behave as they do) need to talk about them seriously. Ready?

(No? I know. Me either. Le sigh. 😮‍💨)

What’s Actually Happening

Here’s the short version: the place where buying decisions get made has moved. It used to be on your website. (You know, where we spend time making those beautiful, perfect experiences? The optimal journeys?)

Now… It’s inside an AI chat window or AI search result. Before your visitor ever clicks through to your carefully curated experience and journey.

In February 2026, G2 surveyed over 1,000 B2B software buyers and found that 51% start their software research with an AI chatbot. Not as a supplement. (Are you paying attention? 51%. That’s more than half STARTING with a chatbot instead of Google!!) And 71% rely on AI chatbots somewhere in the research process, up from roughly 60% just seven months earlier.

Meanwhile, Adobe Analytics published data showing AI-referred traffic to US retailers grew 393% year-over-year in Q1 2026. That number peaked at 1,151% YoY in December during holiday shopping. 🤯

These aren’t niche early-adopter behaviors anymore. This is mainstream.

The Conversion Flip Few Noticed

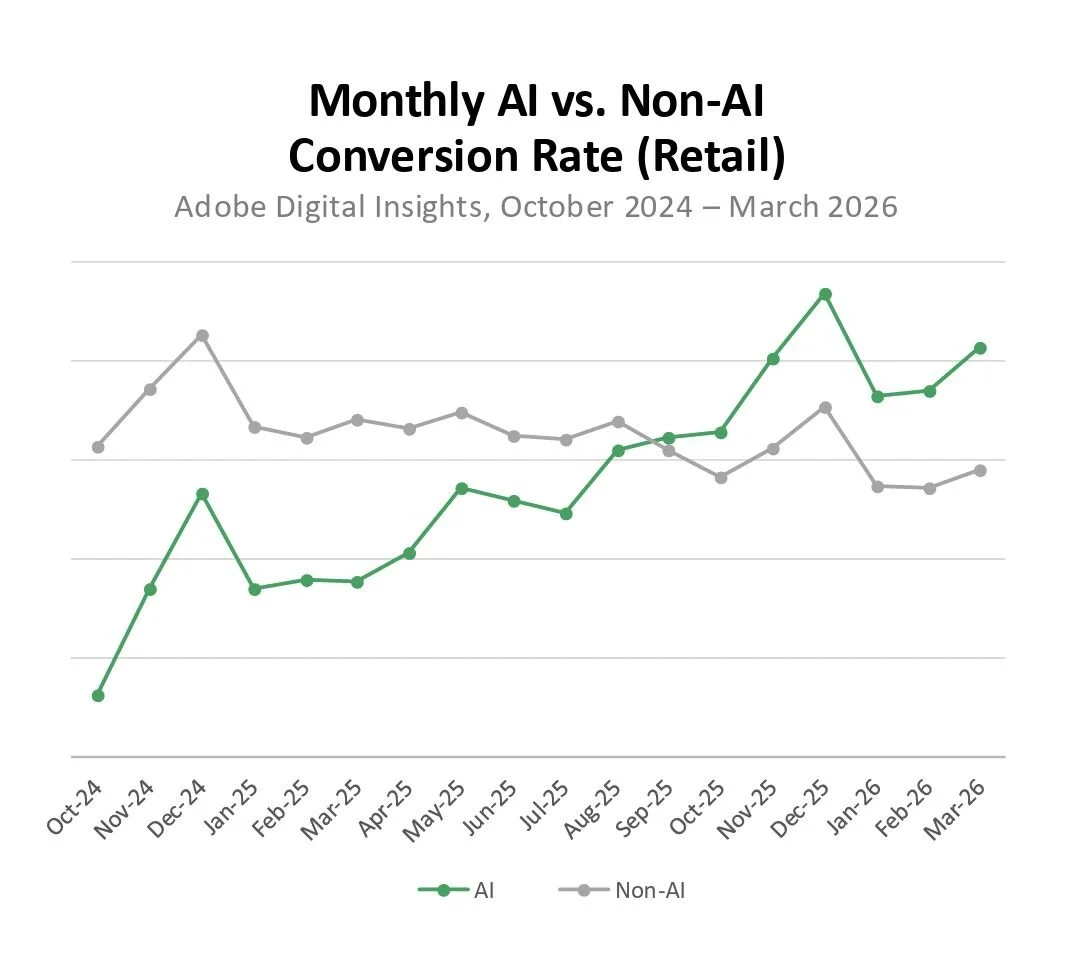

Twelve months ago, AI-referred visitors converted at roughly half the rate of other traffic sources. Today, per Adobe’s data as analyzed by Sani Manić, AI-referred traffic converts 42% better than non-AI traffic.

Same channel. Same stores. Completely different story.

Look at the chart Sani shared in his LinkedIn post (blame Adobe for the visuals). The AI-referred traffic line starts at the bottom in October 2024. By late 2025, it crosses non-AI traffic, then just keeps climbing.

This isn’t gradual maturation. Sani puts it bluntly: paid search matured slowly. Mobile matured slowly. Social matured slowly. AI-referred traffic didn’t do that. Two measurement checkpoints, twelve months apart, sign flipped. Anyone working from a “let’s learn what works over the next year” AI strategy playbook is working from a brief that’s a year out of date.

Accompanying this flip: engagement up 12%, time spent up 48%, pages per visit up 13%, revenue per visit up 37%. All versus non-AI traffic.

The click to your website, app, or product is now the last step of a decision, not the first.

Why? Because by the time someone clicks through from ChatGPT, Claude, or Gemini, they’re not arriving to browse. They already compared options, asked follow-up questions, and narrowed their shortlist inside the AI.

What This Does to Your Funnel

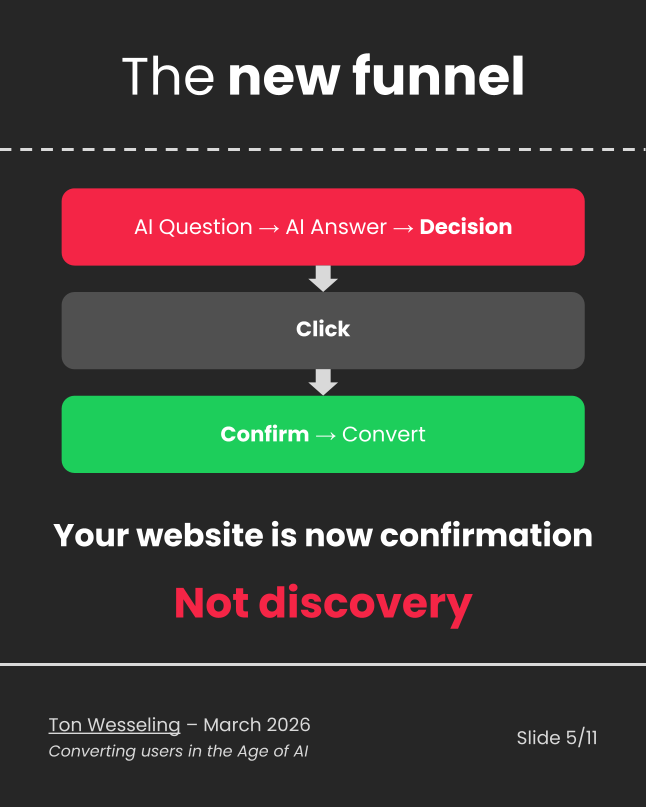

Ton Wesseling framed this perfectly: we’ve moved from a website-as-discovery model to a website-as-confirmation model.

Credit: Ton Wesseling from his LinkedIn post on this topic

In the old world, your website did the educating. Users arrived curious and left (hopefully) convinced. Your job was to guide them through a journey.

In this brave new world, the AI does the educating. Users arrive pre-briefed, pre-convinced, and significantly less patient. If your site doesn’t immediately confirm what the AI told them (same pricing, same benefits, same framing), trust breaks instantly. And when trust breaks, conversion drops.

The G2 data backs this up from the demand side. Buyers aren’t using AI to orient themselves in a new category. The #1 use case is comparing vendor strengths and weaknesses: these are already-informed buyers, using AI as a synthesis engine to make a call. And 8 in 10 say AI accelerated their purchasing decision. Brand loyalty? Name recognition? Forget it. According to the report, little-known vendors that are doing a better job getting seen by AI are getting the deals.

Here’s what that means for your optimization practice:

Your classic top-of-funnel metrics (sessions, page views, scroll depth, time on site) are going to look broken. Shorter sessions, higher conversions, less scroll, more revenue per visit. This isn’t your site getting better. It’s your visitor arriving further down the funnel, because an AI handled the middle part of the consideration cycle.

Ton’s warning is worth sitting with: your new CRO priority is consistency and trust, not persuasion. The visitor isn’t coming to be convinced. They’re coming to confirm. If anything on your site contradicts what the AI said, you lose them—fast. Consistency = trust = conversions in the new world.

AI is Now the Shortlist Generator

The G2 report, The Answer Economy, found that AI chatbots are now the #1 source influencing which vendors make buyer shortlists, ahead of review sites, market research firms, vendor websites, and peers.

And 69% of buyers chose a different vendor than initially planned because of AI guidance. One third bought from a vendor they’d never heard of before their AI conversation.

Read that again. One third of buyers were converted to a brand they Did. Not. Know. Existed. Because of an AI answer. That’s not a minor channel effect. That’s a discovery mechanism with the power to create new market entrants overnight and erase established players from consideration sets before a single sales call happens.

For B2B folks specifically: 85% think more highly of a vendor cited by an AI in its answer. The citation itself is now a trust signal.

And buyers aren’t asking softball questions. Two-thirds open with category or competitor-based prompts - straight into evaluation mode. They’re not easing in. They want a shortlist, fast.

New Confidence Signals

Here’s where it gets interesting for brands - and where experimenters might want to consider a new focus…

The G2 data shows that 45% of buyers say a citation from a review site is the most confidence-inspiring signal in an AI answer. Among daily power users of AI (the most sophisticated segment), that number rises to 50%.

Why does this matter? Because review platforms are a major input to the LLMs generating those answers. Reviews don’t just influence buyers directly anymore. They train the model’s perception of your brand. A thin review presence means the AI has less to synthesize, leading to a weaker answer (or no mention at all).

Here’s the part most people skim past: 64% of buyers encounter AI inaccuracies frequently (weekly or several times a month). When that happens, the most common response (24%) is to seek out peer reviews for verification. Review sites are the gut-check layer when AI gets something wrong.

And review sites are the only source besides AI chatbots that gains influence as buyers move deeper into the funnel. They’re more critical at the decision stage than at discovery. That’s not a top-of-funnel play. That’s infrastructure across the entire journey.

AI combs through the reviews to find your website. Reviews are no longer just marketing aimed at people. They’re now foundational inputs that AI uses to select and surface your website. And if the AI gets it wrong, the review sites are where real people go to verify their decision. So there is no more important place for your brand to be present, and to get it right.

The Legibility Problem Nobody’s Measuring

Sani’s analysis of the Adobe data reveals something that deserves much more attention: the 393% growth figure is an average dragged down by sites that AI can’t read.

Adobe looked at Citation Readability (how well a page can actually be parsed and cited by AI systems):

Top retailers score 62% higher than bottom retailers on their homepages

32% higher on search results pages

30% higher on editorial content

The retailers winning the growth numbers are the ones AI can actually reference. Everyone else is pulling the average down. Which means the real conversion lift on machine-readable sites is likely much higher than 42%.

And here’s the brutal part: most website owners have no idea their site isn’t readable to AI crawlers. Their dashboards don’t show when GPTBot fetched a shell. Their session recordings don’t capture bots. Their attribution rarely tags AI referrals cleanly.
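If you want a rough first pass at tagging this yourself, here’s a minimal Python sketch. Big caveat: the hostname list and the crawler tokens below are my own illustrative assumptions, not something from Adobe or Sani, and they are certainly incomplete. The function names are hypothetical too. Treat it as a starting point for your own logs, not a finished classifier.

```python
from urllib.parse import urlparse

# Assumption: an illustrative, incomplete list of AI assistant hostnames.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "claude.ai",
    "gemini.google.com",
    "perplexity.ai",
    "copilot.microsoft.com",
}

# Assumption: an illustrative, incomplete list of AI crawler user-agent tokens.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def is_ai_referral(referrer: str) -> bool:
    """True if the referrer URL's host is a listed AI host or a subdomain of one."""
    host = (urlparse(referrer).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in AI_REFERRER_HOSTS)


def is_ai_crawler(user_agent: str) -> bool:
    """True if the user-agent string contains one of the listed crawler tokens."""
    ua = user_agent.lower()
    return any(token.lower() in ua for token in AI_CRAWLER_TOKENS)


if __name__ == "__main__":
    print(is_ai_referral("https://chatgpt.com/"))
    print(is_ai_crawler("Mozilla/5.0 (compatible; GPTBot/1.0)"))
```

Note that referrers without a scheme (just "chatgpt.com") won’t parse to a hostname here, and plenty of AI-driven visits arrive with no referrer at all, so expect this to undercount.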

Here’s Sani’s two-step diagnosis. You can run it this weekend to find out what AI crawlers can actually read:

Disable JavaScript. Reload your product or landing page. Does the price render? The product name? The CTA? Most AI crawlers don’t execute JavaScript. If your critical facts need JS to appear, the AI can’t cite them.

Without JavaScript enabled, check what leads. Does your page open with relevant answers about your products? Can you find what you’re looking for immediately? Does it explain your products, their costs, and their availability? Or are you forced to use navigation, search, and click-to-expand elements to get there? AI indexers don’t scroll through brand theater to find the facts. Humans tolerate it. AI doesn’t.
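The two steps above can be roughly scripted if you’d rather not click through every page by hand. This is a sketch under my own assumptions, not Sani’s method: it reads the raw HTML (no JavaScript executed), strips script and style content, and checks whether the facts you care about show up in the text. The helper names, the user-agent string, and the example facts are all hypothetical.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class _TextExtractor(HTMLParser):
    """Collects text that sits outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Text a non-JS reader would find in the raw HTML, in document order."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def missing_facts(html: str, facts: list[str]) -> list[str]:
    """Step 1: which critical facts do NOT appear without JavaScript?"""
    text = visible_text(html).lower()
    return [fact for fact in facts if fact.lower() not in text]


def fetch_raw_html(url: str) -> str:
    """Fetch a page without executing any JavaScript (hypothetical user agent)."""
    req = Request(url, headers={"User-Agent": "legibility-check/0.1"})
    with urlopen(req, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

For step 2, something like `visible_text(html)[:300]` gives you a quick look at what leads the page. If that opening text is navigation labels and slogans instead of product, price, and availability, you have your answer. A substring match is crude (it won’t catch a price that is formatted differently), so use it to flag pages for a manual look, not as a verdict.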

“AI-referred traffic doesn’t reward optimization. It rewards legibility. Those are not the same thing.” - Sani Manić

What This Means for the Testing Crowd Specifically

If you’ve read this far, you’re probably already connecting dots to your own practice. Here’s the TL;DR to reward you:

Your pre/post traffic analysis is measuring survivors. Higher CVR, shorter sessions, lower bounce: that looks like winning. But you’re only seeing the users who made it through the AI filter. The ones the model routed elsewhere, or misrepresented, or never surfaced to you at all? They don’t show up in your analytics. You don’t know what you lost.

Your experiment audience is pre-qualified in ways you didn’t control. If you’re running an A/B test and your AI-referred segment is growing, those visitors are arriving with more context and stronger intent than your traditional traffic. That shifts your baseline. It affects your power calculations. It means conversion rates on test variants may look different, not because your variant is better, but because your audience mix changed.
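To make the mix effect concrete, here’s a small worked example. The 2% baseline conversion rate and the 5% and 20% AI traffic shares are numbers I made up for illustration; only the “42% better” multiplier comes from the Adobe figure above. Nothing about either segment changes between the two scenarios except how much of your traffic is AI-referred.

```python
def blended_cvr(ai_share: float, ai_cvr: float, other_cvr: float) -> float:
    """Overall conversion rate as a traffic-weighted average of two segments."""
    return ai_share * ai_cvr + (1 - ai_share) * other_cvr


# Hypothetical inputs: only the 1.42 multiplier comes from the cited data.
other_cvr = 0.020
ai_cvr = other_cvr * 1.42

before = blended_cvr(0.05, ai_cvr, other_cvr)  # 5% of traffic is AI-referred
after = blended_cvr(0.20, ai_cvr, other_cvr)   # 20% of traffic is AI-referred

print(f"blended CVR before: {before:.4%}")
print(f"blended CVR after:  {after:.4%}")
print(f"apparent lift from mix alone: {after / before - 1:.1%}")
```

In a properly randomized test, both arms see the same mix, so this mostly bites your baselines, your power calculations, and any pre/post comparison. That’s a good reason to report results by traffic source as well as in aggregate.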

Consistency is now a testable hypothesis. Ton’s framing of “confirmation not discovery” suggests something we can actually act on: does aligning your key entry pages’ messaging with the language AI uses to describe you improve conversion among AI-referred visitors? Those are tests worth running.
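If you do run that test, one way to read it out is a plain two-proportion z-test on the AI-referred segment only. This is a minimal sketch using a standard pooled z-test, not a method from any of the sources cited here. The function name and the counts in the usage lines are hypothetical, and your testing tool’s own stats engine may do something different.

```python
import math


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test. Returns (z, two-sided p-value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts for AI-referred visitors only:
    # control = current messaging, variant = messaging aligned with the AI's description.
    z, p = two_proportion_z(conv_a=50, n_a=1000, conv_b=80, n_b=1000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

The AI-referred segment will usually be small, so decide on the segment and the sample size before you start, or you’ll end up slicing until something looks significant.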

Your KPIs from two years ago may be lying to you. This isn’t “traffic is declining, panic.” It’s “traffic composition changed, and your old benchmarks don’t account for that.” Lower scroll depth + higher conversion isn’t a contradiction. It’s a signal that your visitor arrived further down the funnel than they used to.

My $0.02: THE LAST THING YOU WANT IS TO BE INVISIBLE TO AI.

The shift from “search” to “answer” as the primary discovery mechanism is not a future problem. It’s here. The funnel didn’t disappear. It just moved. The journey we’ve spent most of our careers optimizing has shifted to locations most of us will now have to figure out how to influence. Most of it now happens inside a chat window, before your visitor ever sees your site, app, or product.

For optimizers, this creates a new upstream layer we’ve never had to think about before. You’re not just optimizing the experience for the human who arrives. You’re increasingly optimizing for how accurately and favorably an LLM represents you. That is what will determine who arrives in the first place.

The good news: the skills transfer. We understand how to observe, test, and interpret behavior. We’re trained to question what metrics actually mean. We’re comfortable with the idea that the thing you measure isn’t always the thing you care about.

The hard part is accepting that some of the most important conversion decisions now happen in a place we can’t directly instrument. That’s uncomfortable. It should be.

But that’s exactly the kind of problem that experimentation people (people who’ve spent careers making sense of noisy, incomplete behavioral data) are built to work on.

Read the OG

All three sources are worth your time directly:

G2’s “The Answer Economy” Report: article

Sani Manić’s Adobe AI Traffic Analysis: LinkedIn Post & article

Ton Wesseling on CRO in the AI age: LinkedIn post

Got a take? Reach out! Now recruiting speakers for both the TLC and Experimentation Island.